Voice AI at Scale: What OpenAI's Architecture Tells Builders

OpenAI's low-latency voice AI stack reveals the real engineering tradeoffs every builder faces when shipping real-time AI products.

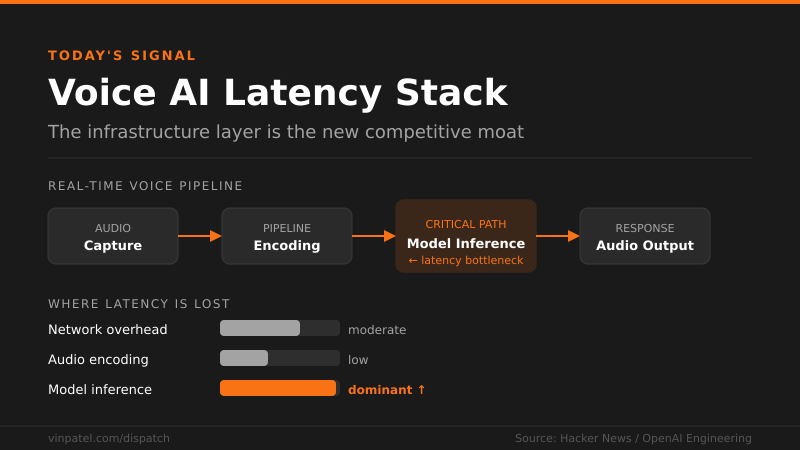

The signal: OpenAI published details on how they deliver low-latency voice AI at scale — and the engineering tradeoffs are a masterclass in real-time AI infrastructure.

Why it matters: If you’re building any product with voice, streaming, or real-time AI responses, the bottlenecks they’re solving — model inference latency, connection overhead, audio pipeline optimization — are the exact walls you’ll hit. This isn’t theory; it’s a blueprint from the team running it at the hardest possible scale.

The pattern I’m watching: Every serious AI product is converging on real-time interaction as the bar for “good enough.” The companies that figure out the latency stack first — not just the model quality — are the ones that win on user experience. Infrastructure is the new moat, and it’s being built right now.

What I’d do with this: Read the architecture details as a checklist against your own stack — where are you leaning on OpenAI’s API latency instead of optimizing your own pipeline? If voice or real-time AI is on your roadmap in the next 12 months, start prototyping the infrastructure layer now, not after you’ve promised a demo.

Get the daily signal in your inbox