Small Models Match Big AI in Finding Security Vulnerabilities

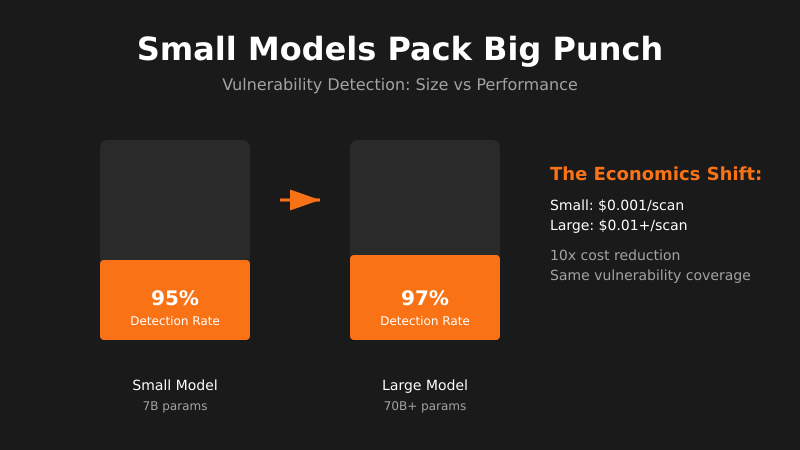

Smaller AI models are proving just as effective as large ones at discovering security flaws, changing the economics of automated vulnerability detection.

The signal: Small AI models are matching the vulnerability detection capabilities of much larger, more expensive systems like Mythos.

Why it matters: This undercuts the assumption that you need massive compute budgets for effective security tooling. If a 7B-parameter model can spot the same vulnerabilities as a 70B model, your security automation could be roughly 10x cheaper to run, assuming inference cost scales with parameter count.
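The 10x figure is back-of-the-envelope math, not a benchmark. Here is a minimal sketch of it, assuming inference cost scales linearly with parameter count (a simplification; real costs also depend on hardware, quantization, and batching). The function name `relative_cost` is mine, purely for illustration.

```python
def relative_cost(small_params_b: float, large_params_b: float) -> float:
    """Return how many times cheaper the small model is to run.

    Assumption: cost is proportional to parameter count (in billions).
    This ignores hardware, quantization, and batching effects.
    """
    if small_params_b <= 0 or large_params_b <= 0:
        raise ValueError("parameter counts must be positive")
    return large_params_b / small_params_b


ratio = relative_cost(7, 70)
print(f"Under this assumption, a 7B model is about {ratio:.0f}x cheaper than a 70B model")
```

Swap in your own measured cost per scan if you have it; the ratio is only as good as the linear-scaling assumption behind it.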

The pattern I’m watching: The capability gap between small and large models is closing faster than most people expected. We’re hitting a point where model size stops being the deciding factor for many specialized tasks. What matters is the training data and the fine-tuning approach.

What I’d do with this: Start experimenting with smaller models in your security workflows now. Deploy them locally, run continuous scans without API costs, and build security tooling that doesn’t depend on external services. The moat isn’t model size anymore; it’s execution speed.
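To make "run continuous scans locally" concrete, here is a rough sketch of a scan loop with a pluggable detector. Everything in it is hypothetical: `scan_tree`, the `Detector` type, and `toy_detector` are illustrative names, and the toy detector is a plain string check standing in for whatever locally hosted model you end up calling.

```python
from pathlib import Path
from typing import Callable, Iterable

# Hypothetical interface: takes source text, returns a list of finding descriptions.
Detector = Callable[[str], list[str]]


def scan_tree(
    root: Path, detect: Detector, suffixes: Iterable[str] = (".py",)
) -> dict[str, list[str]]:
    """Run `detect` on every matching file under `root` and collect findings."""
    wanted = tuple(suffixes)
    findings: dict[str, list[str]] = {}
    for path in sorted(root.rglob("*")):
        if path.is_file() and path.suffix in wanted:
            text = path.read_text(encoding="utf-8", errors="replace")
            issues = detect(text)
            if issues:  # only record files with at least one finding
                findings[str(path)] = issues
    return findings


def toy_detector(source: str) -> list[str]:
    """Stand-in for a local model call; flags one obviously risky pattern."""
    return ["use of eval()"] if "eval(" in source else []
```

Because the detector is just a function, you can swap the toy check for a call into a small local model without touching the scan loop, and run the whole thing on a schedule or in CI with no external API in the path.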
