Neuro-Symbolic AI: The Missing Link for Trustworthy Agents

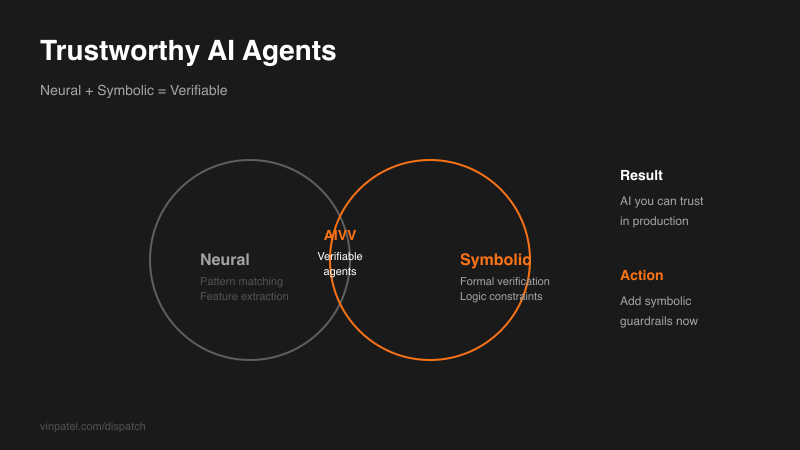

The AIVV framework combines symbolic reasoning with LLMs to verify autonomous systems: a path toward AI you can trust in production.

The signal: Researchers introduced AIVV, a neuro-symbolic framework that integrates LLM agents with formal verification methods to validate autonomous systems before deployment.

Why it matters: We’re hitting the reliability wall with pure neural approaches. Your AI agent might work brilliantly in testing, then fail catastrophically in production. AIVV tackles the core problem: verifying AI behavior in edge cases you never trained for.

The pattern I’m watching: The industry is pivoting toward hybrid architectures — neural networks plus symbolic reasoning. Pure end-to-end neural approaches are losing momentum among practitioners who need reliability guarantees.

What I’d do with this: Start experimenting with symbolic constraints in your AI projects. Even simple rule-based guardrails can improve reliability, because they block any action that violates a constraint you already know must hold, no matter how the model arrived at it. If you’re building anything safety-critical, dig into formal methods now. The companies that crack reliable AI verification first will own the enterprise market.
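To make "simple rule-based guardrails" concrete, here is a minimal sketch in Python. It is not AIVV's method and it is not formal verification. It is only the entry-level version of the idea: check each action an agent proposes against explicit rules before running it. Every name in it (`Action`, `max_refund`, `forbid`, `guarded_execute`, the `issue_refund` and `delete_account` actions, and the 100.0 limit) is hypothetical and chosen for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Action:
    """A hypothetical action proposed by an agent (e.g. parsed from LLM output)."""
    name: str
    params: Dict[str, Any]


# A rule returns an error message when violated, or None when the action passes.
Rule = Callable[[Action], Optional[str]]


def max_refund(limit: float) -> Rule:
    """Rule: refunds may not exceed `limit` (illustrative business constraint)."""
    def check(action: Action) -> Optional[str]:
        if action.name == "issue_refund" and action.params.get("amount", 0) > limit:
            return f"refund exceeds limit of {limit}"
        return None
    return check


def forbid(names: set) -> Rule:
    """Rule: the named actions are never allowed."""
    def check(action: Action) -> Optional[str]:
        if action.name in names:
            return f"action '{action.name}' is not allowed"
        return None
    return check


def validate(action: Action, rules: List[Rule]) -> List[str]:
    """Run every rule and collect the messages of the ones that fail."""
    messages = []
    for rule in rules:
        message = rule(action)
        if message is not None:
            messages.append(message)
    return messages


# Assumed rule set for this sketch; real constraints come from your domain.
RULES: List[Rule] = [max_refund(100.0), forbid({"delete_account"})]


def guarded_execute(action: Action, execute: Callable[[Action], Any],
                    rules: List[Rule] = RULES) -> Dict[str, Any]:
    """Execute the action only if no rule is violated; otherwise block it."""
    violations = validate(action, rules)
    if violations:
        return {"status": "blocked", "violations": violations}
    return {"status": "ok", "result": execute(action)}
```

With these rules, a call such as `guarded_execute(Action("issue_refund", {"amount": 500}), run)` is blocked before `run` is ever invoked, while a 20-unit refund goes through. The rules live outside the model, so they hold regardless of what the model generates, and they are plain functions you can unit-test. Formal methods go further by proving properties over all possible inputs rather than checking one action at a time.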
