MegaTrain Runs 100B Models on a Single GPU

MegaTrain enables full-precision training of 100B+ parameter LLMs on one GPU, opening large-model development to builders without cluster access.

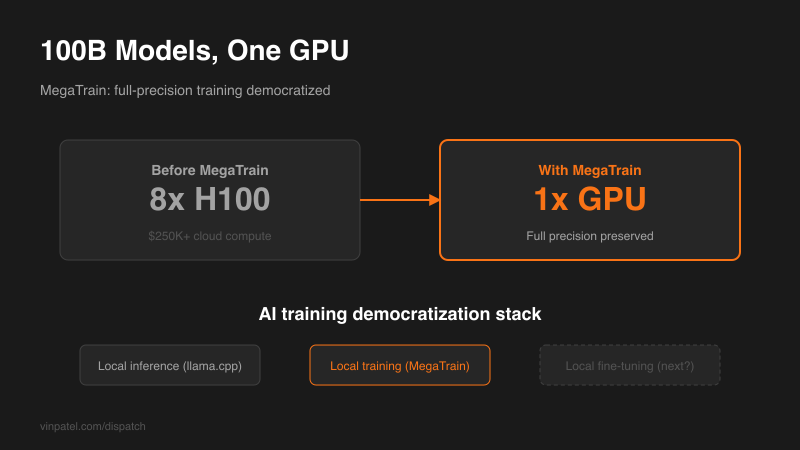

The signal: MegaTrain enables full-precision training of 100B+ parameter LLMs on a single GPU, eliminating the need for multi-node clusters.

Why it matters: Training large models has been gated by hardware access: you needed clusters of A100s or H100s. MegaTrain lowers that barrier with memory-optimization techniques that fit a 100B-parameter training run onto one GPU without dropping to reduced precision. Solo builders and small teams can now train competitive models without six-figure cloud compute bills.
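To see why a single GPU is notable here, a back-of-the-envelope estimate helps. The sketch below assumes plain fp32 training with Adam (4 bytes each for weights, gradients, and Adam's two moment buffers) and ignores activations and framework overhead. It illustrates the scale of the problem only; it does not describe how MegaTrain itself manages memory. The 80 GB device size is an assumption matching the largest common A100/H100 variants.

```python
# Rough memory estimate for full-precision (fp32) training with Adam.
# Assumption: weights, gradients, and Adam's first and second moments
# are each stored in fp32 (4 bytes per parameter).
# Activations, temporary buffers, and framework overhead are ignored.

BYTES_PER_FP32 = 4
GB = 1e9  # decimal gigabytes


def training_state_gb(num_params: float) -> dict[str, float]:
    """Return the approximate training-state size in GB, by component."""
    weights = num_params * BYTES_PER_FP32
    grads = num_params * BYTES_PER_FP32
    adam_moments = 2 * num_params * BYTES_PER_FP32  # first + second moment
    total = weights + grads + adam_moments
    return {
        "weights": weights / GB,
        "grads": grads / GB,
        "adam_moments": adam_moments / GB,
        "total": total / GB,
    }


if __name__ == "__main__":
    est = training_state_gb(100e9)  # a 100B-parameter model
    gpu_gb = 80  # assumed single-GPU memory (e.g. an 80 GB A100/H100)
    for name, size in est.items():
        print(f"{name}: {size:,.0f} GB")
    print(f"about {est['total'] / gpu_gb:.0f}x the memory of one {gpu_gb} GB GPU")
```

Under these assumptions the training state alone is about 1.6 TB, roughly 20 times an 80 GB GPU, so any single-GPU approach has to keep most of that state somewhere other than device memory at any given moment.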

The pattern I’m watching: Democratization of AI infrastructure is accelerating. First local inference (Lemonade, llama.cpp), now local training. The gap between well-funded labs and indie builders is shrinking at every layer of the stack.

What I’d do with this: If you’ve been fine-tuning small models because you couldn’t afford large-scale training, revisit your constraints. Test MegaTrain on your existing hardware. Even if you’re not training 100B models, its memory-optimization patterns can carry over to other training workflows.
