Hardware Attestation Could Lock Down the AI Stack Forever

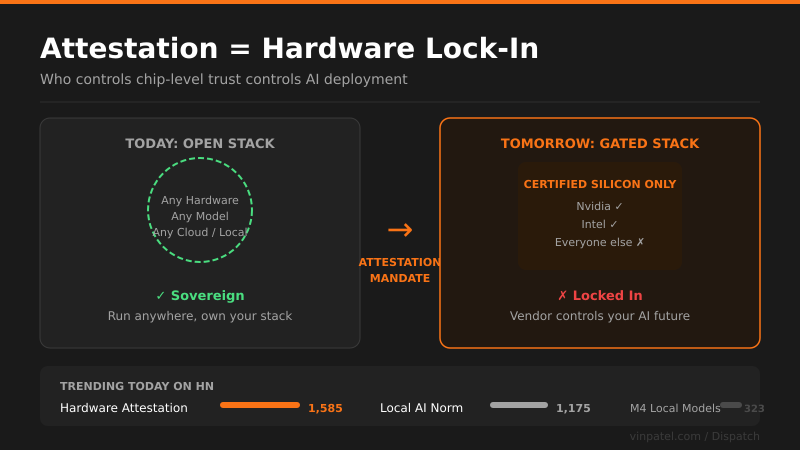

Hardware attestation is emerging as a quiet monopoly lever—whoever controls chip-level trust controls who gets to run AI at scale.

The signal: A Hacker News thread on hardware attestation is lighting up, with builders recognizing that chip-level trust mechanisms could hand a small number of hardware vendors permanent gatekeeping power over the entire AI stack.

Why it matters: If attestation becomes the standard for “trusted” AI inference, and regulators or enterprises start requiring it, you can only run compliant workloads on certified silicon. That’s not a software moat; it’s a hardware moat, and hardware moats are nearly impossible to route around.

The pattern I’m watching: This is unfolding in parallel with the local AI surge; 1,100+ people engaged with “Local AI needs to be the norm” on the same day. The community is connecting the dots: remote attestation + compliance requirements = the death of truly local, sovereign AI. Meanwhile, the M4 Mac thread shows people are already stress-testing alternatives.

What I’d do with this: If you’re building products that handle sensitive data, architect now for hardware diversity, and don’t let your inference layer get locked to a single vendor’s attestation scheme. Watch how the open-source firmware and RISC-V communities respond; that’s where the escape hatch gets built.
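
One way to act on the hardware-diversity advice is to keep attestation behind an interface you own, so no single vendor’s scheme leaks into the inference layer. Below is a minimal sketch, assuming Python 3. Every class and function name here (`AttestationProvider`, `StubProvider`, `attest_with_any`) is hypothetical, and the stub backend only stands in for real vendor integrations; it does not call any actual attestation API.

```python
from __future__ import annotations

from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationResult:
    """Outcome of one attestation check (hypothetical structure)."""
    provider: str
    verified: bool


class AttestationProvider(ABC):
    """Hypothetical vendor-neutral interface; each backend wraps one scheme."""

    name: str

    @abstractmethod
    def is_available(self) -> bool:
        """Return True if this backend's hardware is present on the host."""

    @abstractmethod
    def attest(self, nonce: bytes) -> AttestationResult:
        """Produce and check evidence bound to the caller's nonce."""


class StubProvider(AttestationProvider):
    """Placeholder backend standing in for a real vendor integration."""

    def __init__(self, name: str, available: bool, passes: bool) -> None:
        self.name = name
        self._available = available
        self._passes = passes

    def is_available(self) -> bool:
        return self._available

    def attest(self, nonce: bytes) -> AttestationResult:
        # A real backend would request signed evidence from the hardware and
        # verify it; this stub only reports its configured outcome and
        # rejects an empty nonce.
        return AttestationResult(self.name, self._passes and len(nonce) > 0)


def attest_with_any(
    providers: list[AttestationProvider], nonce: bytes
) -> AttestationResult | None:
    """Try each available backend in order; return the first verified result."""
    for provider in providers:
        if not provider.is_available():
            continue  # skip backends whose hardware is not on this host
        result = provider.attest(nonce)
        if result.verified:
            return result
    return None  # no backend could attest on this host


# Usage: "vendor-a" hardware is absent, so the router falls through to "vendor-b".
backends = [
    StubProvider("vendor-a", available=False, passes=True),
    StubProvider("vendor-b", available=True, passes=True),
]
result = attest_with_any(backends, nonce=b"\x01" * 16)
print(result)  # AttestationResult(provider='vendor-b', verified=True)
```

The design choice is that callers depend only on `attest_with_any`, so adding or dropping a vendor backend does not touch inference code.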
