Claude's System Prompt Changes Reveal Model Evolution Tactics

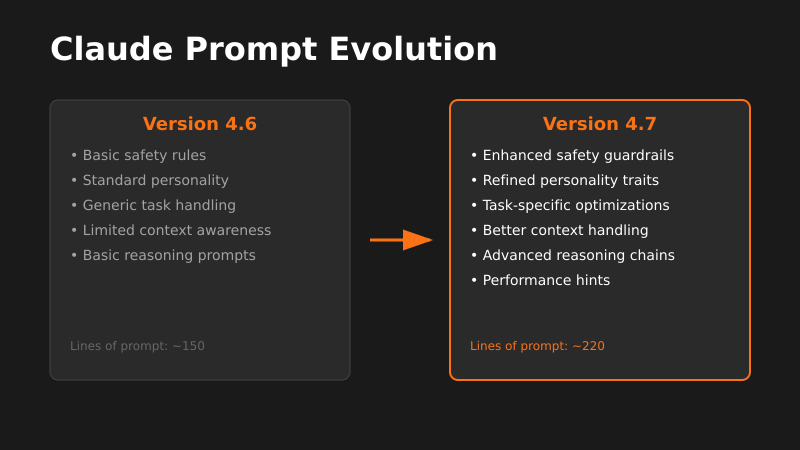

Changes between the Claude Opus 4.6 and 4.7 system prompts show how AI labs iterate on model behavior through system prompt revisions, not only architecture updates.

The signal: Developers reverse-engineered differences between Claude Opus 4.6 and 4.7 system prompts, revealing subtle but significant instruction changes.
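A comparison like this can be reproduced with a plain text diff. The sketch below uses Python's standard `difflib`; the helper name `diff_prompts` and both prompt strings are made-up placeholders for illustration, not the actual Claude system prompts.

```python
import difflib


def diff_prompts(old: str, new: str, old_label: str = "old", new_label: str = "new") -> list[str]:
    """Return unified-diff lines between two prompt texts."""
    return list(
        difflib.unified_diff(
            old.splitlines(),
            new.splitlines(),
            fromfile=old_label,
            tofile=new_label,
            lineterm="",  # inputs have no trailing newlines, so emit none
        )
    )


# Placeholder text only: these are NOT real system prompts.
OLD_PROMPT = "Be concise.\nCite sources when asked."
NEW_PROMPT = "Be concise.\nCite sources for factual claims."

changes = diff_prompts(OLD_PROMPT, NEW_PROMPT, "prompt-a", "prompt-b")
for line in changes:
    print(line)
```

Lines starting with `-` were removed and lines starting with `+` were added, which makes even a one-sentence instruction change easy to spot.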

Why it matters: This suggests AI labs adjust model behavior between releases through prompt engineering, which is far cheaper than retraining. Understanding these patterns helps you optimize your own prompts and anticipate behavior shifts before they break your integrations.

The pattern I’m watching: We’re entering an era where model improvements come from system prompt revisions, not just parameter scaling. Labs are treating system prompts as product features, tuning personality, safety guardrails, and task performance through careful prompt crafting.

What I’d do with this: Start version-controlling your prompts and A/B testing them like any other critical system component. Build prompt management infrastructure now: tools that let you swap prompts, track performance metrics, and roll back when a model changes underneath you.
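As a starting point, here is a minimal in-memory sketch of that idea. The names `PromptRegistry` and `ab_bucket` are hypothetical, invented for this example; a real setup would likely persist versions in git or a database and record metrics per variant.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Hypothetical in-memory store of prompt versions with rollback."""

    versions: list[str] = field(default_factory=list)
    history: list[int] = field(default_factory=list)  # stack of activated version indices

    def publish(self, text: str) -> int:
        """Store a new version, make it active, and return its index."""
        self.versions.append(text)
        index = len(self.versions) - 1
        self.history.append(index)
        return index

    def active(self) -> str:
        """Return the currently active prompt text."""
        if not self.history:
            raise LookupError("no prompt published yet")
        return self.versions[self.history[-1]]

    def rollback(self) -> str:
        """Re-activate the previously active version and return it."""
        if len(self.history) < 2:
            raise LookupError("nothing to roll back to")
        self.history.pop()
        return self.active()


def ab_bucket(user_id: str, variants: list[str]) -> str:
    """Deterministically assign a user to one variant by hashing the user id."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return variants[int.from_bytes(digest[:8], "big") % len(variants)]


registry = PromptRegistry()
registry.publish("v1: answer briefly.")
registry.publish("v2: answer briefly and cite sources.")
restored = registry.rollback()  # v2 misbehaves, so return to v1
variant = ab_bucket("user-123", ["control", "candidate"])
```

Hashing the user id keeps each user in the same variant across requests, so performance metrics for the two prompts stay comparable.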