ChatGPT 5.5 Pro Is Impressing Builders — But LLMs Still Corrupt Your Docs

ChatGPT 5.5 Pro is turning heads on Hacker News, but a parallel signal warns that delegating document work to LLMs introduces silent corruption risks.

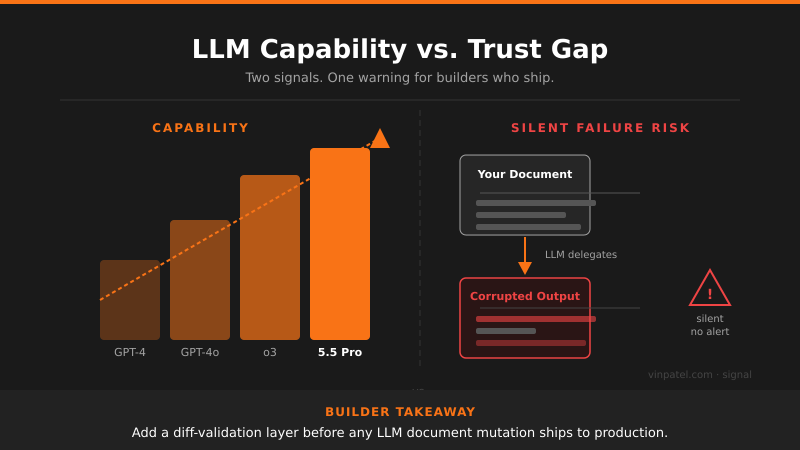

The signal: ChatGPT 5.5 Pro is generating serious buzz among developers on Hacker News, where it is the top story by engagement, while a companion thread warns that LLMs silently corrupt documents when they are handed editing tasks.

Why it matters: These two signals are in direct tension: the capability ceiling is rising fast, and the failure surface is growing with it. If you’re shipping any product that lets LLMs read, edit, or transform user documents, you’re likely introducing quiet data integrity bugs that users won’t catch until it’s too late.

The pattern I’m watching: Every major capability jump in models is followed by a wave of “wait, it does that?” failure discoveries. Claude Code’s effectiveness with HTML shows models can be shockingly good at structured output, but unstructured document delegation is still a minefield. The gap between impressive demos and production-safe behavior is where products break.

What I’d do with this: Before delegating any document mutation to an LLM in your pipeline, add a diff-validation layer that flags changes the user didn’t explicitly request, structural ones above all. Treat LLM document edits the way you’d treat user input: never trust, always verify.
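
Here is a minimal sketch of what such a diff-validation layer could look like, assuming the document is line-oriented text and that your product already knows which original lines the user asked to change. The names `find_unrequested_changes`, `UnrequestedChange`, and `allowed_ranges` are hypothetical and do not come from any library; the diffing itself uses Python's standard `difflib`. Treat it as a starting point, not a finished safeguard.

```python
from __future__ import annotations

import difflib
from dataclasses import dataclass


@dataclass(frozen=True)
class UnrequestedChange:
    """Hypothetical record for one edit that falls outside the requested scope."""
    kind: str             # difflib opcode tag: "replace", "delete", or "insert"
    start_line: int       # 1-based line number in the original document
    original: list[str]   # lines as they were before the LLM edit
    edited: list[str]     # lines the LLM produced in their place


def find_unrequested_changes(
    original: str,
    edited: str,
    allowed_ranges: list[tuple[int, int]],
) -> list[UnrequestedChange]:
    """Return every changed block not fully inside an allowed line range.

    allowed_ranges holds 1-based, inclusive (first, last) line ranges of the
    ORIGINAL document that the user explicitly asked to change (an assumption
    about how your pipeline records the request).
    """
    before = original.splitlines()
    after = edited.splitlines()
    matcher = difflib.SequenceMatcher(a=before, b=after, autojunk=False)

    flagged = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            continue
        # Opcodes use 0-based half-open ranges; convert to 1-based inclusive.
        # A pure insertion (i1 == i2) is attributed to the line it lands before.
        first = i1 + 1
        last = max(i2, first)
        in_scope = any(lo <= first and last <= hi for lo, hi in allowed_ranges)
        if not in_scope:
            flagged.append(
                UnrequestedChange(tag, first, before[i1:i2], after[j1:j2])
            )
    return flagged


# Example: the user asked to update the price on line 2 only.
original = "Title\nPrice: $100\nDelivery: 5 days\nTerms: net 30\n"
edited = "Title\nPrice: $120\nDelivery: 5 days\nTerms: net 45\n"
for change in find_unrequested_changes(original, edited, [(2, 2)]):
    print(change.kind, change.start_line, change.original, "->", change.edited)
```

In this example the requested price change passes, and the silent rewrite of the payment terms on line 4 is flagged. The check is deliberately conservative: when a requested and an unrequested change sit on adjacent lines, `difflib` reports them as one block and the whole block is flagged for review.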
