AI Slop Is Poisoning the Communities You Depend On

AI-generated noise is degrading online communities that developers rely on for real signal — and builders need a strategy to adapt now.

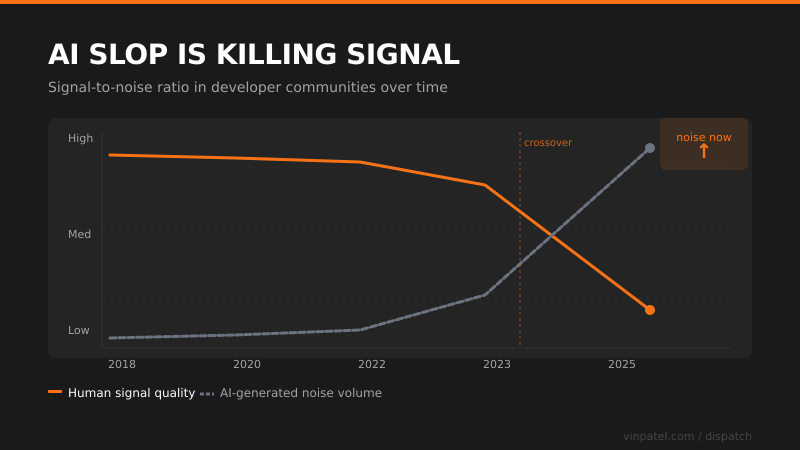

The signal: AI-generated slop is systematically degrading the quality of online communities — forums, comment threads, Q&A sites — the exact places developers go to solve hard problems.

Why it matters: The informal knowledge networks that make us faster — Stack Overflow, Reddit, niche Discord servers — depend on genuine human effort and trust. When LLM-generated filler floods those spaces, the signal-to-noise ratio collapses and the community loses its reason to exist.

The pattern I’m watching: This isn’t just a content moderation problem — it’s an infrastructure problem. The tacit knowledge layer of the internet is getting hollowed out at exactly the moment AI tools need high-quality human-generated data to stay useful. We’re in a feedback loop where AI trains on the web, degrades the web, then trains on the degraded version.

What I’d do with this: If you run a community or product with user-generated content, treat quality signals as a core feature now: reputation systems, verified contributors, and friction-as-a-feature (for example, posting limits for new or unproven accounts). Personally, I’d also invest more in closed, curated networks of trusted practitioners rather than relying on public forums where the humans are increasingly outnumbered.
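To make "friction-as-a-feature" concrete, here is a minimal sketch of a reputation-gated posting rule. Everything in it is an assumption for illustration: the `Contributor` fields, the threshold values, and the function names are hypothetical and not taken from any real platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Contributor:
    name: str
    reputation: int = 0     # hypothetical score (e.g. from accepted answers or upvotes)
    verified: bool = False  # hypothetical flag set by some manual or identity check


# Hypothetical thresholds; a real community would tune these.
MIN_REPUTATION_TO_POST_FREELY = 50
UNPROVEN_DAILY_LIMIT = 3


def daily_post_limit(contributor: Contributor) -> Optional[int]:
    """Return the maximum posts per day, or None if there is no limit."""
    if contributor.verified or contributor.reputation >= MIN_REPUTATION_TO_POST_FREELY:
        return None
    return UNPROVEN_DAILY_LIMIT


def may_post(contributor: Contributor, posts_today: int) -> bool:
    """Apply friction only to accounts that have not yet earned trust."""
    limit = daily_post_limit(contributor)
    return limit is None or posts_today < limit
```

The design choice is that trusted contributors see no friction at all, while unproven accounts hit a small daily cap, which makes bulk-posting generated filler more expensive without blocking newcomers outright.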
