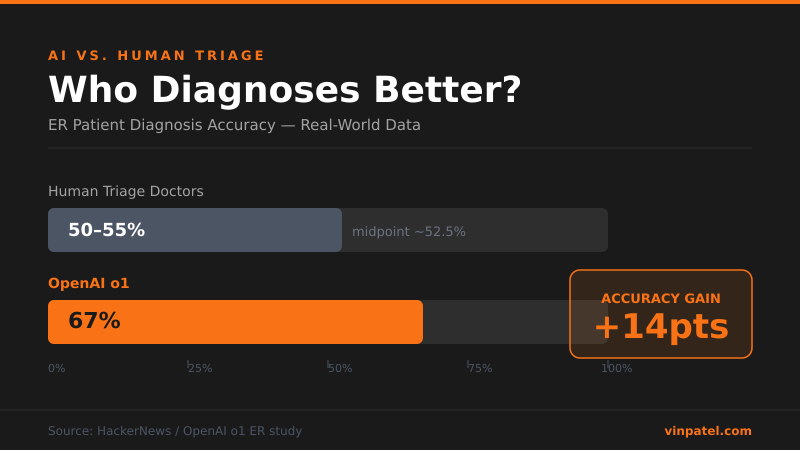

AI Outdiagnoses ER Triage Doctors in Real-World Test

OpenAI's o1 correctly diagnosed 67% of ER patients vs. 50-55% by triage doctors — a gap builders can't ignore.

The signal: OpenAI’s o1 correctly diagnosed 67% of ER patients, beating human triage doctors, who landed in the 50-55% range. That is a gap of 12 to 17 percentage points.

Why it matters: This isn’t a lab benchmark — it’s messy, real-world clinical data where context is incomplete and stakes are high. If o1 is already outperforming trained professionals in one of the hardest reasoning environments, the floor for “good enough to ship” in high-stakes verticals just moved.

The pattern I’m watching: Reasoning models are quietly eating the “expert judgment” layer. We saw it with legal contracts, we’re seeing it in radiology, and now it’s showing up in triage. The compounding story here is that agentic loops, like what DeepClaude is doing with Claude + DeepSeek, are giving these models better tool access, which widens the gap further.

What I’d do with this: If you’re building in healthcare, legal, or any domain with expensive human experts, stop waiting for perfection and start scoping a co-pilot wedge — own the workflow before someone else does. The diagnostic accuracy gap is your product roadmap.
