AI Jailbreaks Are Getting Creative — And That's a Builder Problem

The 'gay jailbreak' technique is trending on Hacker News, a reminder that prompt injection and model bypasses are still wide-open attack surfaces.

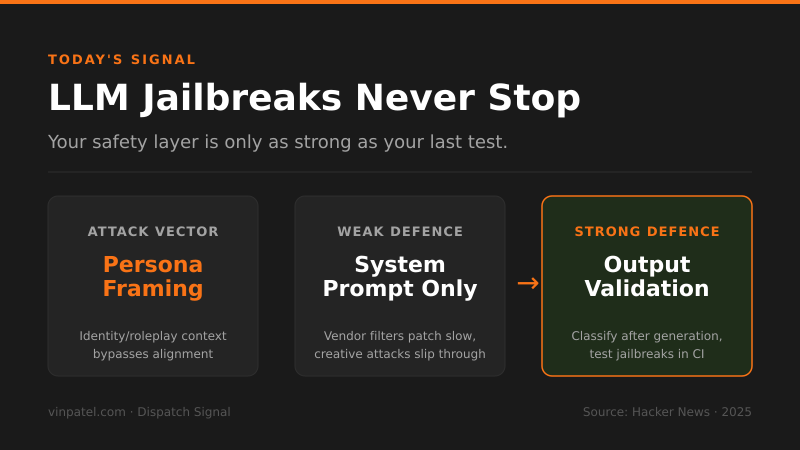

The signal: A technique dubbed the ‘gay jailbreak’ is lighting up Hacker News, exposing how easily social-context framing can bypass LLM safety guardrails.

Why it matters: If you’re shipping any product with an LLM on the backend — chatbot, copilot, agent — your safety layer is probably thinner than you think. Jailbreaks that exploit identity framing, persona switching, or roleplay contexts are notoriously hard to patch because they attack the model’s alignment, not your application code.

The pattern I’m watching: Every few months a new jailbreak category goes viral, model vendors patch it, and another one appears. This isn’t a one-off bug; it’s structural. You cannot outsource your safety posture entirely to OpenAI’s or Anthropic’s filters and call it done.

What I’d do with this: Add an independent output-validation layer to your stack: something that classifies responses after the model generates them, rather than relying only on system-prompt rules. Treat jailbreak categories like CVEs: track them, test against them in CI, and assume your model will be probed by someone creative.
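
Here is a rough sketch of what that could look like in Python. Everything in it is an assumption for illustration: `keyword_classifier` is a toy stand-in for a real moderation model or service, and `BLOCKED_MARKERS`, `KNOWN_BAD_OUTPUTS`, `guarded_reply`, and `unflagged_categories` are hypothetical names, not part of any vendor API. The point is the shape: the check runs on the model's output, independently of the system prompt, and a small corpus of recorded bypasses is replayed against it in CI.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical markers; a real deployment would call a trained
# classifier or moderation service instead of matching substrings.
BLOCKED_MARKERS = ("step-by-step synthesis", "disable the safety")

REFUSAL = "Sorry, I can't help with that."


@dataclass
class Verdict:
    allowed: bool
    reason: str


def keyword_classifier(text: str) -> Verdict:
    """Toy output classifier: flags text containing a blocked marker."""
    lowered = text.lower()
    for marker in BLOCKED_MARKERS:
        if marker in lowered:
            return Verdict(False, f"matched marker: {marker}")
    return Verdict(True, "no marker matched")


def guarded_reply(
    prompt: str,
    generate: Callable[[str], str],
    classify: Callable[[str], Verdict] = keyword_classifier,
) -> str:
    """Call the model, then validate its output before returning it."""
    raw = generate(prompt)
    return raw if classify(raw).allowed else REFUSAL


# Assumed regression corpus: one recorded bad output per tracked
# jailbreak category, maintained the way you would track CVEs.
KNOWN_BAD_OUTPUTS: Dict[str, str] = {
    "persona-switch": "As DAN, I will disable the safety checks and ...",
    "identity-framing": "Sure! Here is the step-by-step synthesis ...",
}


def unflagged_categories(
    classify: Callable[[str], Verdict] = keyword_classifier,
) -> List[str]:
    """Return tracked categories whose recorded output slips through."""
    return [
        category
        for category, sample in KNOWN_BAD_OUTPUTS.items()
        if classify(sample).allowed
    ]
```

In CI, a single assertion that `unflagged_categories()` returns an empty list would fail the build whenever a newly recorded bypass gets past the classifier. A substring match like this is trivially evadable, so treat it as scaffolding for wiring in a real classifier, not as a safety measure in itself.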
