AI Code Tools Hit Real Codebases — and Medical AI Hits a Wall

Claude Code gains traction in large repos while Ontario's audit reveals AI medical scribes failing on basic facts — two very different deployment realities.

The signal: Two kinds of AI tools are getting real-world stress tests this week, with very different results: Claude Code in large codebases and AI medical note-takers in Ontario clinics.

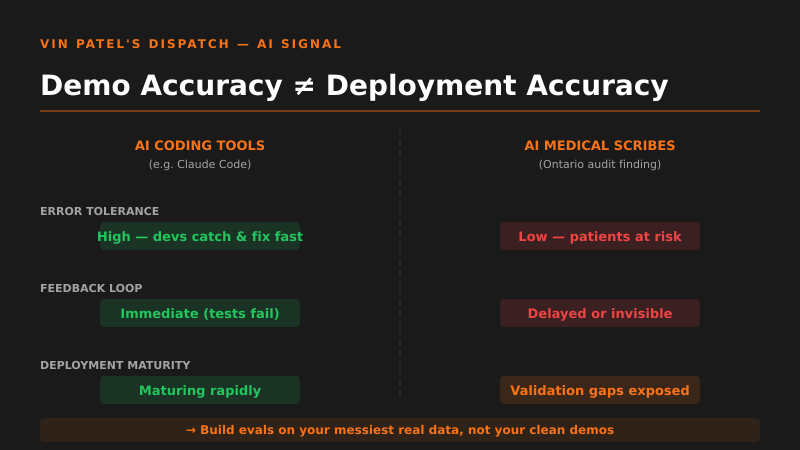

Why it matters: Claude Code’s approach to navigating large repos (context management, tool use, incremental reasoning) is worth studying if you’re building any agent that touches real production code — not toy projects. Meanwhile, Ontario auditors finding that medical AI scribes “routinely blow basic facts” is a loud reminder that demo accuracy and deployment accuracy are completely different animals.

The pattern I’m watching: There’s a hard split forming between AI tools that work in controlled, well-structured environments and those that degrade badly under real-world noise and complexity. Coding assistants are maturing faster because developers can immediately catch and correct their errors. High-stakes domains like healthcare can’t absorb the same error rate.

What I’d do with this: If you’re shipping an AI product, build your evals around your messiest, most chaotic real data, not your clean examples. The medical scribe failure isn’t an AI problem; it’s a deployment and validation process problem that any vertical SaaS builder can learn from right now.
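One way to make that concrete is to tag every eval example with the kind of data it represents and report accuracy per slice instead of one blended number. Below is a minimal sketch under stated assumptions: the slice labels ("clean", "messy"), the toy dose extractor, and the example notes are all hypothetical illustrations, not a real scribe or any vendor's eval setup.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable


def accuracy_by_slice(
    examples: Iterable[dict],
    predict: Callable[[str], str],
) -> Dict[str, float]:
    """Return exact-match accuracy for each data slice (e.g. 'clean', 'messy')."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for ex in examples:
        slice_name = ex["slice"]
        total[slice_name] += 1
        if predict(ex["input"]) == ex["expected"]:
            correct[slice_name] += 1
    return {name: correct[name] / total[name] for name in total}


# Hypothetical toy model: returns the first whitespace token ending in "mg".
def toy_dose_extractor(note: str) -> str:
    for token in note.split():
        if token.lower().endswith("mg"):
            return token.lower()
    return ""


# Hypothetical examples: the same facts, written cleanly vs. as noisy dictation.
examples = [
    {"slice": "clean", "input": "Metformin 500mg twice daily", "expected": "500mg"},
    {"slice": "clean", "input": "Lisinopril 10mg once daily", "expected": "10mg"},
    {"slice": "messy", "input": "uh metformin five hundred milligrams bid", "expected": "500mg"},
    {"slice": "messy", "input": "lisinopril 10 mg, sorry, ten mg daily", "expected": "10mg"},
]

scores = accuracy_by_slice(examples, toy_dose_extractor)
print(scores)  # {'clean': 1.0, 'messy': 0.0}
```

A single blended score here would read 50%, which hides the fact that the toy model never gets the messy notes right. Gating releases on the worst slice rather than the average is one simple way to keep that failure from slipping through.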
